You train a model on a laptop. It hits 95% accuracy on your test set. Then you try to run it on the target microcontroller and discover that it needs three times the available RAM, exceeds the power budget, and takes twice as long per inference as your timing deadline allows. Back to the drawing board.

This cycle — train, compress, fail, repeat — is common precisely because the hardware constraints are treated as a final filter rather than a design input. The result is slow iteration, fragile integration, and PoCs that look promising on paper but collapse under real deployment conditions.

We wanted to explore whether a more systematic workflow was possible, so we built an internal proof of concept called MLForge.

MLForge is a constraint-driven pipeline that connects AI-assisted model design directly to embedded targets — from a YAML specification through to hardware-validated firmware.

In this proof of concept we implemented the pipeline using Zephyr RTOS, allowing us to move quickly from QEMU simulation to real hardware.

This is not a product. It is a capability demonstration — built and iterated under real embedded constraints — designed to show that repeatable, traceable embedded ML is achievable today.

Why Embedded Machine Learning on Zephyr?

Embedded ML allows devices to learn patterns from sensor data rather than relying solely on hard-coded rules. It underpins wake-word detection, anomaly detection, sound classification, and predictive monitoring across a range of industries.

Zephyr is a lightweight, open-source RTOS designed for microcontrollers. It manages timing, tasks, and hardware access in constrained environments and provides a production-grade firmware foundation. It is a natural platform for embedded ML work, but bringing the two together properly requires more than simply porting a model.

The real challenge lies in designing a workflow that respects hardware limits from the outset.

The Problem We Set Out to Solve

Embedded ML is not just about model accuracy. It must operate within a complete system that includes:

Strict RAM and flash limits

Deterministic inference timing

Reproducible builds

Validation on real hardware

What We Built

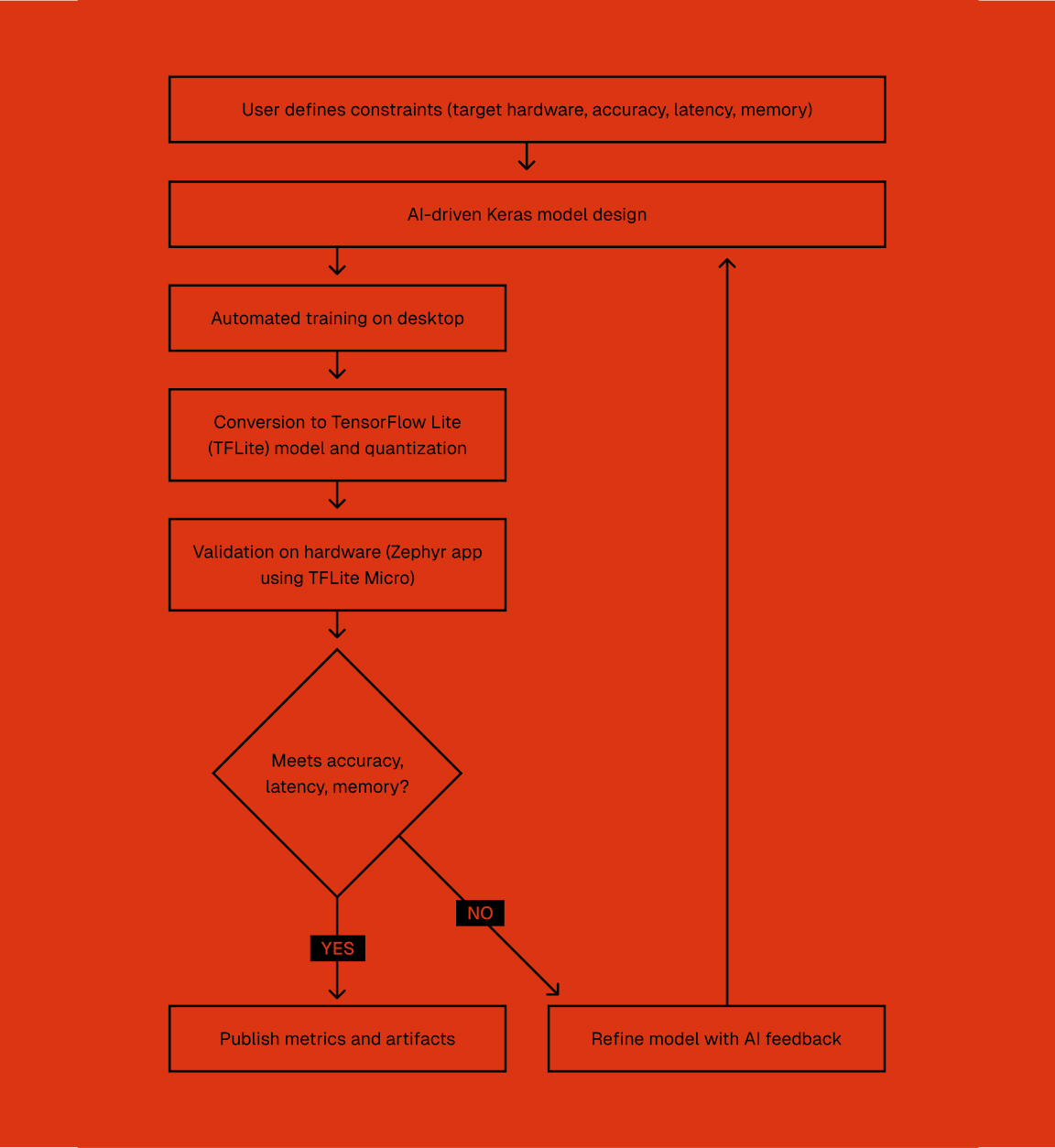

MLForge is a configuration-driven pipeline that begins with a YAML contract defining hardware limits, execution mode, and accuracy targets. From there, it works toward a model that satisfies those constraints.
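To make the idea of a contract concrete, here is a minimal sketch of how such a specification might look once loaded into the pipeline. The field names (`ram_limit_kib`, `flash_limit_kib`, `latency_deadline_ms`, `execution_mode`, `target_mse`), the YAML layout in the comment, and all values are illustrative assumptions, not MLForge's actual schema.

```python
from dataclasses import dataclass

# Hypothetical YAML contract (layout, names and values are assumptions):
#
#   target:
#     ram_limit_kib: 64
#     flash_limit_kib: 256
#     latency_deadline_ms: 20
#   execution_mode: qemu        # or "hardware"
#   accuracy:
#     target_mse: 0.01


@dataclass(frozen=True)
class Contract:
    ram_limit_kib: int
    flash_limit_kib: int
    latency_deadline_ms: float
    execution_mode: str  # "qemu" or "hardware"
    target_mse: float

    def __post_init__(self) -> None:
        # Reject obviously invalid contracts before any training time is spent.
        if self.execution_mode not in ("qemu", "hardware"):
            raise ValueError(f"unknown execution_mode: {self.execution_mode!r}")
        if self.ram_limit_kib <= 0 or self.flash_limit_kib <= 0:
            raise ValueError("memory limits must be positive")
        if self.latency_deadline_ms <= 0 or self.target_mse <= 0:
            raise ValueError("deadline and error target must be positive")


def contract_from_mapping(doc: dict) -> Contract:
    """Flatten the nested mapping a YAML loader would return into a Contract."""
    target = doc["target"]
    return Contract(
        ram_limit_kib=target["ram_limit_kib"],
        flash_limit_kib=target["flash_limit_kib"],
        latency_deadline_ms=target["latency_deadline_ms"],
        execution_mode=doc["execution_mode"],
        target_mse=doc["accuracy"]["target_mse"],
    )


# The dict below stands in for the parsed YAML shown in the comment above.
example = contract_from_mapping({
    "target": {"ram_limit_kib": 64, "flash_limit_kib": 256, "latency_deadline_ms": 20},
    "execution_mode": "qemu",
    "accuracy": {"target_mse": 0.01},
})
```

Validating the contract at load time means a malformed specification fails immediately rather than after a training run.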

At a high level: constraints go in as YAML, and validated firmware comes out the other end. Between those two points, an AI agent iterates on model architecture, the model is quantised and compiled, and each candidate is executed on Zephyr — first in QEMU simulation, then against real hardware — with measured results fed back into the next iteration.

The process is:

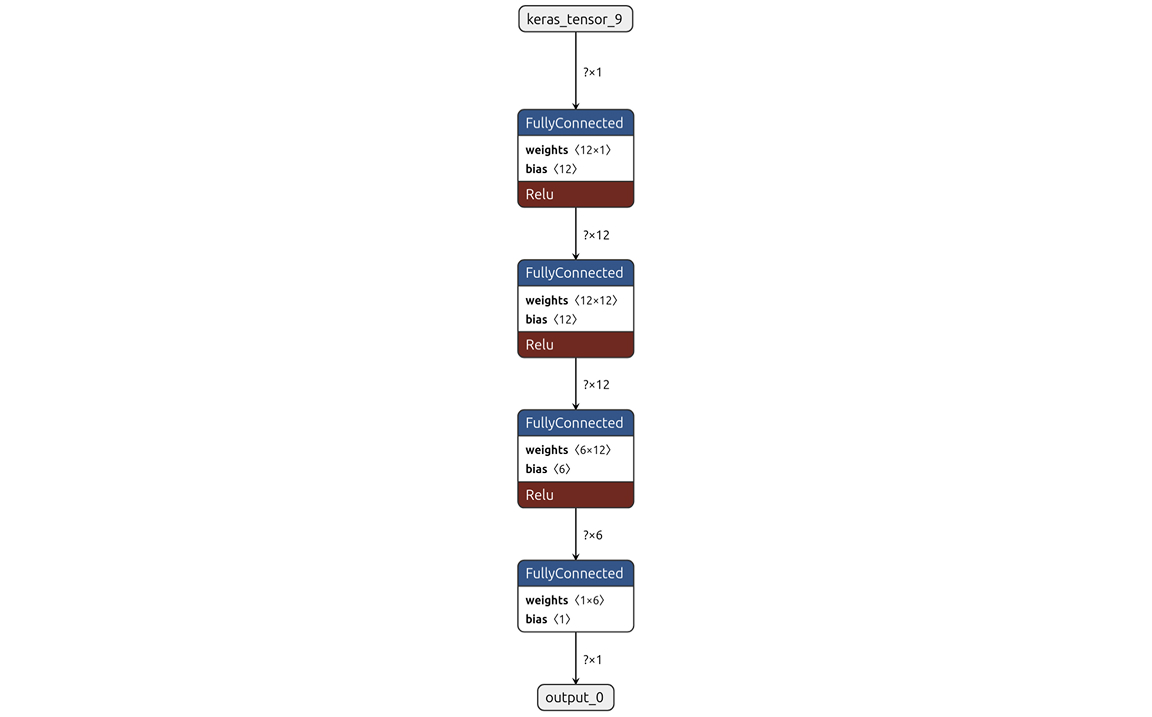

An AI agent proposes a Keras model architecture based on the specified constraints.

The model is trained and quantised using TensorFlow and converted to TFLite (int8).

It is built and executed on Zephyr, starting in QEMU and progressing to hardware-in-the-loop validation.

Metrics and artefacts are captured for each iteration.

The agent receives measured feedback and adjusts the architecture accordingly.
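The steps above form a feedback loop, which can be sketched as follows. This is an illustration under stated assumptions rather than MLForge's implementation: `Limits`, `Measurement`, `optimise`, `toy_propose`, and `toy_evaluate` are hypothetical names, and the toy functions stand in for the AI agent and for the train, quantise, build, and run stages, using made-up cost formulas.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


@dataclass
class Limits:
    ram_kib: float
    flash_kib: float
    latency_ms: float
    target_mse: float


@dataclass
class Measurement:
    ram_kib: float
    flash_kib: float
    latency_ms: float
    mse: float


Step = Tuple[dict, Measurement]  # (candidate architecture, what was measured)


def within_limits(m: Measurement, limits: Limits) -> bool:
    return (m.ram_kib <= limits.ram_kib
            and m.flash_kib <= limits.flash_kib
            and m.latency_ms <= limits.latency_ms
            and m.mse <= limits.target_mse)


def optimise(propose: Callable[[Limits, Optional[Step]], dict],
             evaluate: Callable[[dict], Measurement],
             limits: Limits,
             max_iterations: int = 10) -> Tuple[Optional[dict], List[Step]]:
    """Propose, measure, and feed the measurement back until a candidate fits."""
    history: List[Step] = []
    feedback: Optional[Step] = None
    for _ in range(max_iterations):
        candidate = propose(limits, feedback)
        measured = evaluate(candidate)
        history.append((candidate, measured))  # keep every iteration for later review
        if within_limits(measured, limits):
            return candidate, history
        feedback = (candidate, measured)
    return None, history


def toy_propose(limits: Limits, feedback: Optional[Step]) -> dict:
    # Stand-in for the agent: start wide, halve the width after each miss.
    if feedback is None:
        return {"width": 64}
    previous, _ = feedback
    return {"width": max(1, previous["width"] // 2)}


def toy_evaluate(candidate: dict) -> Measurement:
    # Stand-in for train + quantise + build + run; the formulas are invented.
    w = candidate["width"]
    return Measurement(ram_kib=2.0 * w, flash_kib=4.0 * w, latency_ms=0.5 * w, mse=0.64 / w)


best, history = optimise(toy_propose, toy_evaluate, Limits(64, 256, 20, 0.05))
```

With these toy formulas the first candidate exceeds the RAM limit and the second fits, so the loop stops after two iterations. A real proposer would use the measured feedback to trade error against size rather than only shrinking.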

What We Measured

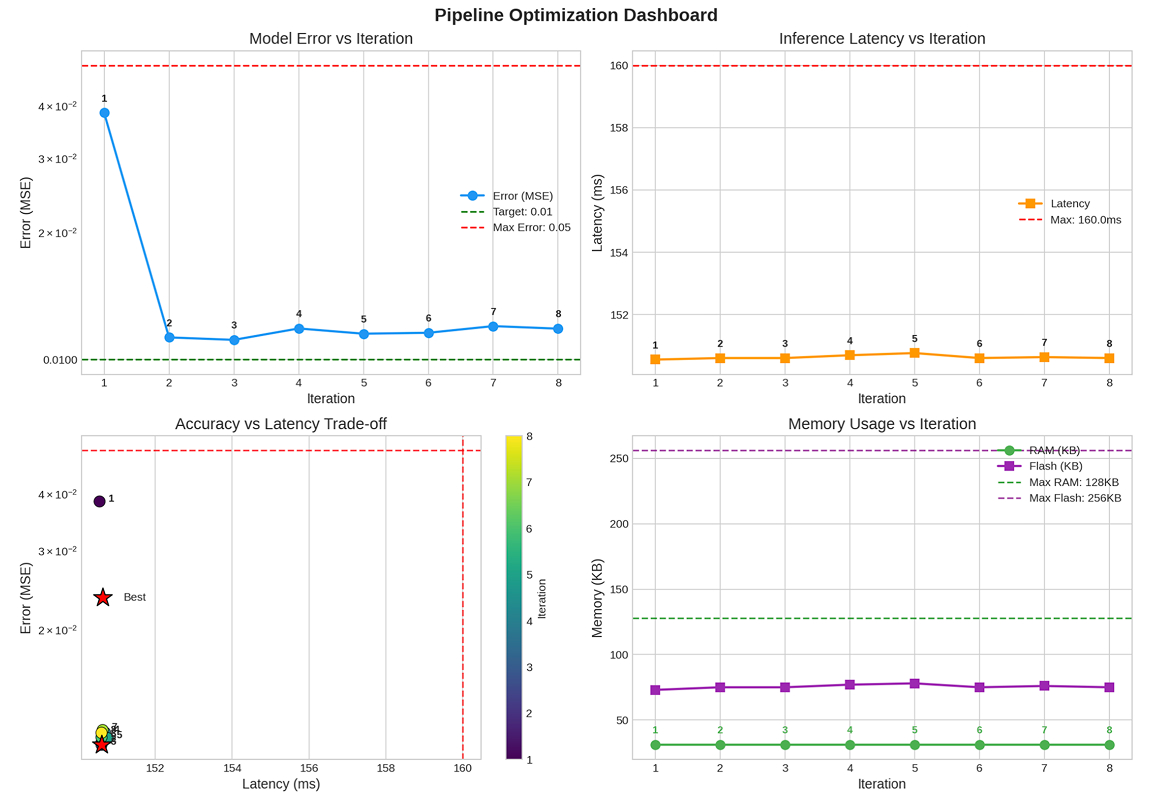

Because embedded systems operate within hard boundaries, we tracked the metrics that matter on real devices:

RAM and flash consumption against hardware limits

Inference latency against a defined deadline

Model error, reported as mean squared error (MSE), across iterations

Capturing these metrics per iteration makes trade-offs visible. Instead of discovering problems late in integration, we can see convergence, or divergence, as the iterations progress.
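As a rough illustration of how these per-iteration metrics could be computed and compared, the sketch below defines MSE, the remaining headroom against a limit, and a simple trend check. The helper names (`mean_squared_error`, `headroom`, `trend`) and the tolerance value are assumptions made for this example.

```python
from typing import Sequence


def mean_squared_error(predicted: Sequence[float], expected: Sequence[float]) -> float:
    """Average of the squared differences between predictions and targets."""
    if len(predicted) != len(expected) or len(predicted) == 0:
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((p - e) ** 2 for p, e in zip(predicted, expected)) / len(predicted)


def headroom(measured: float, limit: float) -> float:
    """Fraction of the budget still unused; negative means the limit is exceeded."""
    return (limit - measured) / limit


def trend(mse_history: Sequence[float], tolerance: float = 1e-6) -> str:
    """Classify the most recent step by comparing the last two MSE values."""
    if len(mse_history) < 2:
        return "unknown"
    delta = mse_history[-1] - mse_history[-2]
    if delta < -tolerance:
        return "converging"
    if delta > tolerance:
        return "diverging"
    return "flat"
```

For example, `headroom(48, 64)` gives 0.25 (a quarter of the RAM budget is still free), while `headroom(80, 64)` gives -0.25, flagging a candidate that overshoots the limit by a quarter.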

Why Zephyr Specifically?

Zephyr lets the same application code and build system target both QEMU simulation and physical hardware, typically by changing only the board selected at build time. This reduces the uncertainty between “works in simulation” and “works on the device.”

Other options, such as FreeRTOS, Mbed OS, or bare-metal approaches, are viable in many contexts, but they generally need more integration work to support this kind of simulation-first, hardware-validated workflow. The combination of QEMU integration, a consistent build system, and broad board support made Zephyr the natural choice for an optimisation loop that needs to run many iterations cheaply before committing to hardware time.

The same build pipeline runs in both environments, which gives the optimisation loop practical value. Each iteration is validated against the same firmware stack and runtime that would apply in production.
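One way to picture "the same build pipeline in both environments" is that only the board name and the final step differ. The sketch below assembles the `west` command lines for each case without executing them. The function name `west_commands`, the application directory `app`, the build directories, and the hardware board name are assumptions for illustration; the exact board identifier depends on the Zephyr version and the target in use.

```python
from typing import List


def west_commands(app_dir: str, board: str, build_dir: str, emulated: bool) -> List[List[str]]:
    """Return the build step and the run/flash step as argument lists."""
    # Same build invocation for both environments; only the board differs.
    build = ["west", "build", "-p", "auto", "-b", board, "-d", build_dir, app_dir]
    if emulated:
        # For QEMU boards, the "run" target starts the firmware in the emulator.
        run = ["west", "build", "-d", build_dir, "-t", "run"]
    else:
        # For physical boards, flash the image to the attached device.
        run = ["west", "flash", "-d", build_dir]
    return [build, run]


# Board names are examples only (assumed, not taken from the project).
qemu_steps = west_commands("app", "qemu_cortex_m3", "build/qemu", emulated=True)
hw_steps = west_commands("app", "nrf52840dk/nrf52840", "build/hw", emulated=False)
```

Each argument list could be passed to `subprocess.run` inside a Zephyr workspace. Keeping separate build directories avoids reconfiguring when switching between the emulated and physical targets.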

What This Demonstrates

The scope of the pipeline is intentionally modest, but it reflects a particular approach to embedded ML: treating the model as one constrained component within a wider embedded system, rather than as an isolated artefact.

The emphasis throughout is on:

Reproducibility

Traceability of configuration and outputs

Validation against real hardware limits

Iterative optimisation under constraint
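Traceability of configuration and outputs can be approximated by recording content hashes alongside each iteration's metrics. The sketch below is one possible approach, not a description of how MLForge stores its artefacts; `build_manifest`, `manifest_json`, and the manifest keys are hypothetical.

```python
import hashlib
import json


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def build_manifest(iteration: int, config_text: str, model_bytes: bytes, metrics: dict) -> dict:
    """Tie one iteration's metrics to the exact config and model that produced them."""
    return {
        "iteration": iteration,
        "config_sha256": sha256_hex(config_text.encode("utf-8")),
        "model_sha256": sha256_hex(model_bytes),
        "metrics": metrics,
    }


def manifest_json(manifest: dict) -> str:
    # Sorted keys give byte-identical output for identical inputs.
    return json.dumps(manifest, sort_keys=True, indent=2)
```

Because the output is deterministic, two runs with the same configuration and model produce the same manifest text, and any change to either input shows up as a different hash.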

What Comes Next

To take this work beyond proof of concept, the next steps would be to:

Replace synthetic data with device-level datasets

Extend constraint checks (power, thermal, scheduler impact)

Add board-specific optimisations and accelerators

Integrate into CI for end-to-end reproducibility

It is also worth noting that while this proof of concept is Zephyr-specific, the underlying methodology is not tied to any particular RTOS. The same constraint-driven, AI-assisted optimisation loop could be extended to Linux-based embedded targets — Yocto-based platforms, Raspberry Pi, or similar higher-powered devices running a full OS stack. On those targets, the constraints shift (more headroom for RAM and compute, but new considerations around power, thermal management, and deployment lifecycle), but the core discipline of treating hardware limits as first-class design inputs remains equally valuable. A natural next step would be to validate the approach across both RTOS and Linux environments within a single pipeline.